KNI (Kubernetes Native Infrastructure) is one of the methods for deploying OpenShift on bare metal with IPI (Installer-Provisioned Infrastructure). Yes, you read that right: an automated bare-metal deployment that leverages the Ironic bare-metal provisioner.

At the time of writing, KNI is still in development and is not yet Generally Available (GA).

The bare-metal bootstrap works a little differently from other IPI methods. Notable differences:

- A provisioner node is required to host the bootstrap VM.

We can temporarily use a node that is intended to become a worker later as the provisioning node. The provisioning node requires virtualization capabilities and the same networking as the other OCP nodes, so reusing a worker node is preferable: the networking and other requirements are already in place, with no need to set up a separate provisioner node.

- The bootstrap node now hosts more supporting containers, such as the Ironic components, MariaDB, and dnsmasq.

In this entry, we are going to deploy OpenShift 4.5.13 using KNI on top of a libvirt lab environment.

High Level Architecture

Lab Prerequisites

- Create the networks and VMs as detailed below. Do not power on the OCP VMs; the installer will manage those nodes during bootstrapping.

- Two networks on the libvirt host, as shown below.

root ~ virsh net-list | egrep 'prov|bare'

baremetal-net active yes yes

provisioning-net active yes yes

root ~ virsh net-dumpxml baremetal-net

<network connections='7'>

<name>baremetal-net</name>

<uuid>ffcfa3d5-4ce6-43ea-84d8-c03b5b10a103</uuid>

<forward mode='nat'>

<nat>

<port start='1024' end='65535'/>

</nat>

</forward>

<bridge name='virbr5' stp='on' delay='0'/>

<mac address='52:54:00:19:8f:63'/>

<domain name='baremetal-net'/>

<ip address='192.168.102.1' netmask='255.255.255.0'>

</ip>

</network>

root ~ virsh net-dumpxml provisioning-net

<network connections='6'>

<name>provisioning-net</name>

<uuid>8e8ed321-8f7b-4ba9-8bad-a5386d321bb4</uuid>

<forward mode='nat'>

<nat>

<port start='1024' end='65535'/>

</nat>

</forward>

<bridge name='virbr4' stp='on' delay='0'/>

<mac address='52:54:00:36:8b:ac'/>

<domain name='provisioning-net'/>

<ip address='192.168.101.1' netmask='255.255.255.0'>

</ip>

</network>

root ~
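
The two networks above could also be defined from a script instead of by hand. The following is a minimal sketch: the function name `net_xml` is an assumption made for illustration, while the network names, bridge names, and gateway addresses are taken from the dumps above. The UUID and MAC address are left out on the assumption that libvirt generates them when they are omitted.

```shell
#!/usr/bin/env bash
# Print a NAT network definition for libvirt.
# Arguments: network name, bridge device, gateway IP (a /24 is assumed).
# No DHCP range is declared, because the helper node serves DHCP.
net_xml() {
  local name=$1 bridge=$2 gateway=$3
  cat <<EOF
<network>
  <name>${name}</name>
  <forward mode='nat'/>
  <bridge name='${bridge}' stp='on' delay='0'/>
  <domain name='${name}'/>
  <ip address='${gateway}' netmask='255.255.255.0'/>
</network>
EOF
}

# Preview the XML only; nothing is changed on the host.
net_xml baremetal-net virbr5 192.168.102.1
net_xml provisioning-net virbr4 192.168.101.1
```

To apply a definition, the output can be saved to a file and passed to `virsh net-define`, followed by `virsh net-start` and `virsh net-autostart` for that network.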

- One helper node attached to the baremetal network to provide the services below:

- DNS and DHCP for the baremetal network

- Host virtualBMC for IPMI control of all OCP node VMs.

- 4vCPU/8GB RAM.

- RHEL 8.2

- One provisioner node:

- RHCOS Image Cache Hosting

- First interface attached to provisioning network

- Second interface attached to baremetal network

- 8vCPU/12GB RAM

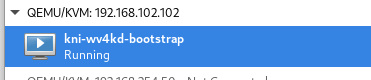

- Hosts the bootstrap VM (created by the installer later)

- RHEL 8.2

- Three master node VMs and two worker node VMs (resources sized for lab use only):

- First interface attached to provisioning network

- Second interface attached to baremetal network

- 8vCPU/16GB RAM.

- 50 GB Disk

Virtual Machine OS Configurations

| Hostname (kni.bytewise.my) | vCPU | Memory | Disk | OS Version |
|---|---|---|---|---|
| openshift-master-0 | 8 | 16 GB | 50 GB | RHCOS 4.5 |
| openshift-master-1 | 8 | 16 GB | 50 GB | RHCOS 4.5 |
| openshift-master-2 | 8 | 16 GB | 50 GB | RHCOS 4.5 |
| openshift-worker-0 | 8 | 16 GB | 50 GB | RHCOS 4.5 |
| openshift-worker-1 | 8 | 16 GB | 50 GB | RHCOS 4.5 |
| provisioner | 8 | 12 GB | 50 GB | RHEL 8.2 |
| kni-bastion (Helper) | 4 | 8 GB | 20 GB | RHEL 8.2 |

WARNING: These VMs run on libvirt and the provisioner node will host the bootstrap VM, so it is CRITICAL that the provisioner VM can do nested virtualization.

https://docs.fedoraproject.org/en-US/quick-docs/using-nested-virtualization-in-kvm/
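
A quick way to confirm the nested virtualization requirement on the libvirt host is to read the KVM module parameter. This is a minimal sketch, not part of the original procedure; the function name `nested_enabled` is an assumption, and the script assumes the `kvm_intel` or `kvm_amd` module is loaded.

```shell
#!/usr/bin/env bash
# Report whether nested virtualization is enabled on a KVM host.
# The parameter file holds "Y" or "1" when nesting is on; which module is
# loaded (kvm_intel or kvm_amd) depends on the CPU vendor.
nested_enabled() {
  local param_file=$1
  [ -r "$param_file" ] || return 1
  case "$(cat "$param_file")" in
    Y|1) return 0 ;;
    *)   return 1 ;;
  esac
}

for module in kvm_intel kvm_amd; do
  file="/sys/module/${module}/parameters/nested"
  if nested_enabled "$file"; then
    echo "nested virtualization enabled (${module})"
  fi
done
```

If nothing is printed, nesting is either disabled or the KVM module is not loaded, and the Fedora guide linked above describes how to turn it on.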

Virtual Machine Network Configurations

| Hostname (kni.bytewise.my) | IP Type | MAC Address | Network | IP Address |
|---|---|---|---|---|
| openshift-master-0 | IPv4 | NIC1 – 52:54:00:d4:d4:37; NIC2 – 52:54:00:58:ef:db | NIC1 – provisioning-net; NIC2 – baremetal-net | NIC1 – Provided by Bootstrap; NIC2 – 192.168.102.103 |
| openshift-master-1 | IPv4 | NIC1 – 52:54:00:cb:88:27; NIC2 – 52:54:00:d8:3e:21 | NIC1 – provisioning-net; NIC2 – baremetal-net | NIC1 – Provided by Bootstrap; NIC2 – 192.168.102.104 |
| openshift-master-2 | IPv4 | NIC1 – 52:54:00:3f:fb:1c; NIC2 – 52:54:00:9c:a2:25 | NIC1 – provisioning-net; NIC2 – baremetal-net | NIC1 – Provided by Bootstrap; NIC2 – 192.168.102.105 |
| openshift-worker-0 | IPv4 | NIC1 – 52:54:00:9a:45:68; NIC2 – 52:54:00:2a:08:51 | NIC1 – provisioning-net; NIC2 – baremetal-net | NIC1 – Provided by Bootstrap; NIC2 – 192.168.102.106 |
| openshift-worker-1 | IPv4 | NIC1 – 52:54:00:99:fc:52; NIC2 – 52:54:00:0c:57:1a | NIC1 – provisioning-net; NIC2 – baremetal-net | NIC1 – Provided by Bootstrap; NIC2 – 192.168.102.107 |
| provisioner | IPv4 | NIC1 – 52:54:00:3e:be:b6; NIC2 – 52:54:00:7c:b4:76 | NIC1 – provisioning-net; NIC2 – baremetal-net | NIC1 – Provided by Bootstrap; NIC2 – 192.168.102.102 |
| kni-bastion (Helper) | IPv4 | NIC1 – 52:54:00:df:a1:14 | NIC1 – baremetal-net | NIC1 – 192.168.102.2 |

Helper Node Configurations

Ensure the node is properly subscribed to RHSM before proceeding to the next step.

Helper Node: DHCP

1. Install the DHCP server package:

[root@kni-bastion ~]# dnf install dhcp-server.x86_64 -y

2. Populate /etc/dhcp/dhcpd.conf:

[root@kni-bastion ~]# cat /etc/dhcp/dhcpd.conf

ddns-update-style interim;

ignore client-updates;

authoritative;

allow booting;

allow bootp;

allow unknown-clients;

default-lease-time -1;

max-lease-time -1;

subnet 192.168.102.0 netmask 255.255.255.0 {

range 192.168.102.200 192.168.102.240;

option routers 192.168.102.1;

option domain-name-servers 192.168.102.2;

option ntp-servers time.unisza.edu.my;

option domain-search "kni.bytewise.my";

host provisioner { hardware ethernet 52:54:00:7c:b4:76; fixed-address 192.168.102.102; }

host openshift-master-0 { hardware ethernet 52:54:00:58:ef:db; fixed-address 192.168.102.103; }

host openshift-master-1 { hardware ethernet 52:54:00:d8:3e:21; fixed-address 192.168.102.104; }

host openshift-master-2 { hardware ethernet 52:54:00:9c:a2:25; fixed-address 192.168.102.105; }

host openshift-worker-0 { hardware ethernet 52:54:00:2a:08:51; fixed-address 192.168.102.106; }

host openshift-worker-1 { hardware ethernet 52:54:00:0c:57:1a; fixed-address 192.168.102.107; }

}
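
The `host` reservations in the configuration above all follow one pattern, so they could be generated from the network table rather than typed by hand. A minimal sketch, where the function name `dhcp_hosts` and the three-column input format are assumptions made for illustration:

```shell
#!/usr/bin/env bash
# Emit one dhcpd "host" reservation per "name mac ip" line read from stdin.
dhcp_hosts() {
  local name mac ip
  while read -r name mac ip; do
    # Skip blank lines and comments.
    case "$name" in ''|'#'*) continue ;; esac
    printf 'host %s { hardware ethernet %s; fixed-address %s; }\n' \
      "$name" "$mac" "$ip"
  done
}

dhcp_hosts <<'EOF'
# name              baremetal NIC MAC   reserved IP
provisioner         52:54:00:7c:b4:76   192.168.102.102
openshift-master-0  52:54:00:58:ef:db   192.168.102.103
EOF
```

After editing the file, `dhcpd -t -cf /etc/dhcp/dhcpd.conf` can be used to check its syntax before the service is started.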

3. Start and enable dhcpd service:

[root@kni-bastion ~]# systemctl enable dhcpd --now

Created symlink /etc/systemd/system/multi-user.target.wants/dhcpd.service → /usr/lib/systemd/system/dhcpd.service.

[root@kni-bastion ~]# systemctl is-active dhcpd

active

Helper Node: DNS

1. Install the BIND package:

[root@kni-bastion ~]# dnf install bind -y

2. Configure /etc/named.conf:

[root@kni-bastion named]# cat /etc/named.conf

//

// named.conf

//

// Provided by Red Hat bind package to configure the ISC BIND named(8) DNS

// server as a caching only nameserver (as a localhost DNS resolver only).

//

// See /usr/share/doc/bind*/sample/ for example named configuration files.

//

options {

#listen-on port 53 { 127.0.0.1; };

listen-on-v6 port 53 { ::1; };

directory "/var/named";

dump-file "/var/named/data/cache_dump.db";

statistics-file "/var/named/data/named_stats.txt";

memstatistics-file "/var/named/data/named_mem_stats.txt";

secroots-file "/var/named/data/named.secroots";

recursing-file "/var/named/data/named.recursing";

allow-query { any; };

recursion yes;

dnssec-enable yes;

dnssec-validation yes;

managed-keys-directory "/var/named/dynamic";

pid-file "/run/named/named.pid";

session-keyfile "/run/named/session.key";

include "/etc/crypto-policies/back-ends/bind.config";

forwarders {8.8.8.8;};

};

logging {

channel default_debug {

file "data/named.run";

severity dynamic;

};

};

zone "." IN {

type hint;

file "named.ca";

};

include "/etc/named.rfc1912.zones";

include "/etc/named.root.key";

zone "kni.bytewise.my" IN {

type master;

file "kni.bytewise.my.db";

allow-update { none; };

};

zone "102.168.192.in-addr.arpa" IN {

type master;

file "102.168.192.in-addr.arpa";

};

3. Create and configure the zone file for “kni.bytewise.my”:

[root@kni-bastion named]# cat /var/named/kni.bytewise.my.db

$TTL 1D

@ IN SOA dns.kni.bytewise.my. root.kni.bytewise.my. (

2019022400 ; serial

3h ; refresh

15 ; retry

1w ; expire

3h ; minimum

)

IN NS dns.kni.bytewise.my.

dns IN A 192.168.102.2

provisioner IN A 192.168.102.102

openshift-master-0 IN A 192.168.102.103

openshift-master-1 IN A 192.168.102.104

openshift-master-2 IN A 192.168.102.105

openshift-worker-0 IN A 192.168.102.106

openshift-worker-1 IN A 192.168.102.107

api IN A 192.168.102.108

*.apps IN A 192.168.102.109

cluster-ns IN A 192.168.102.110

4. Create and configure the reverse zone file “102.168.192.in-addr.arpa”:

$TTL 1D

@ IN SOA dns.kni.bytewise.my. root.kni.bytewise.my. (

2019022400 ; serial

3h ; refresh

15 ; retry

1w ; expire

3h ; minimum

)

IN NS dns.kni.bytewise.my.

102 IN PTR provisioner.kni.bytewise.my.

103 IN PTR openshift-master-0.kni.bytewise.my.

104 IN PTR openshift-master-1.kni.bytewise.my.

105 IN PTR openshift-master-2.kni.bytewise.my.

106 IN PTR openshift-worker-0.kni.bytewise.my.

107 IN PTR openshift-worker-1.kni.bytewise.my.

108 IN PTR api.kni.bytewise.my.

110 IN PTR cluster-ns.kni.bytewise.my.

5. Start and enable the named service:

[root@kni-bastion ~]# systemctl enable named --now

Created symlink /etc/systemd/system/multi-user.target.wants/named.service → /usr/lib/systemd/system/named.service.

[root@kni-bastion named]# systemctl is-active named

active

6. Perform a quick DNS test:

[root@kni-bastion named]# nslookup

> server 192.168.102.2

Default server: 192.168.102.2

Address: 192.168.102.2#53

> api.kni.bytewise.my

Server: 192.168.102.2

Address: 192.168.102.2#53

Name: api.kni.bytewise.my

Address: 192.168.102.108

> 192.168.102.108

108.102.168.192.in-addr.arpa name = api.kni.bytewise.my.

> 192.168.102.103

103.102.168.192.in-addr.arpa name = openshift-master-0.kni.bytewise.my.

>
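
The reverse lookups in the test above rely on the PTR owner name being the IP address with its octets reversed under `in-addr.arpa`. A minimal sketch of that mapping, with `ptr_name` as a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Build the reverse-lookup (PTR) owner name for an IPv4 address by
# reversing the octets and appending in-addr.arpa.
ptr_name() (
  # The function body runs in a subshell so the IFS change does not leak.
  IFS=.
  set -- $1
  echo "$4.$3.$2.$1.in-addr.arpa"
)

for ip in 192.168.102.103 192.168.102.108; do
  echo "${ip} -> $(ptr_name "$ip")"
done
```

The same check can be scripted non-interactively with `dig +short -x 192.168.102.108 @192.168.102.2`, assuming the `bind-utils` package is installed.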

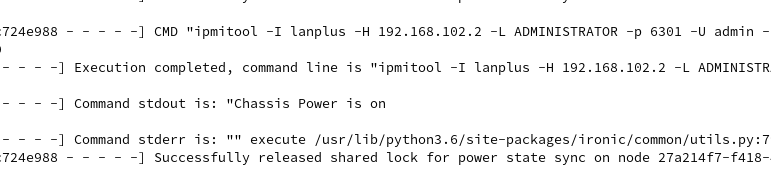

Helper Node: virtualBMC

The kni-bastion node needs to connect to the libvirt host to control the VMs through the hosted VBMC, using a qemu+ssh connection string.

Python virtualBMC –> Libvirt Host (via SSH) –> Managed VM

1. Generate an SSH key pair. The public key will be used to allow kni-bastion to access libvirt via SSH as the root user.

[root@kni-bastion ~]# ssh-keygen -t rsa

2. Test root access to the libvirt host using the generated key pair:

[root@kni-bastion ~]# ssh [email protected]

Last login: Wed Oct 14 14:02:12 2020 from 192.168.102.2

3. Install dependencies for building VBMC using the pip3 command:

[root@kni-bastion ~]# dnf install python3-pip.noarch libvirt-devel gcc python3-devel ipmitool -y

4. Install virtualBMC:

[root@kni-bastion ~]# pip3 install virtualbmc

You can also use a pre-built VBMC RPM, such as the one available from the official OpenStack repos.

5. Start the VBMC daemon and test the client command:

[root@kni-bastion ~]# vbmcd

[root@kni-bastion ~]# vbmc add openshift-master-0 --username admin --password password --port 6301 --address 192.168.102.2 --libvirt-uri qemu+ssh://192.168.254.100/system

[root@kni-bastion ~]# vbmc list

+--------------------+--------+---------------+------+

| Domain name | Status | Address | Port |

+--------------------+--------+---------------+------+

| openshift-master-0 | down | 192.168.102.2 | 6301 |

+--------------------+--------+---------------+------+

[root@kni-bastion ~]# vbmc start openshift-master-0

[root@kni-bastion ~]# vbmc list

+--------------------+---------+---------------+------+

| Domain name | Status | Address | Port |

+--------------------+---------+---------------+------+

| openshift-master-0 | running | 192.168.102.2 | 6301 |

+--------------------+---------+---------------+------+

[root@kni-bastion ~]# ipmitool -I lanplus -U admin -P password -H 192.168.102.2 -p 6301 power status

Chassis Power is off
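
The `vbmc add` call above differs per node only in the domain name and the port, so the full set of commands could be generated in a loop. A minimal sketch that only prints the commands; the function name `vbmc_add_cmds` is an assumption, and the credentials, address, and libvirt URI are the lab values used above:

```shell
#!/usr/bin/env bash
# Print the "vbmc add" command for each node, assigning consecutive IPMI
# ports starting at 6301. Commands are printed, not executed.
vbmc_add_cmds() {
  local port=6301 node
  for node in "$@"; do
    echo "vbmc add ${node} --username admin --password password" \
         "--port ${port} --address 192.168.102.2" \
         "--libvirt-uri qemu+ssh://192.168.254.100/system"
    port=$((port + 1))
  done
}

vbmc_add_cmds openshift-master-0 openshift-master-1 openshift-master-2 \
              openshift-worker-0 openshift-worker-1
```

After reviewing the printed lines, they can be pasted into the shell or piped to `sh` on the helper node.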

6. Add the rest of the VMs:

[root@kni-bastion ~]# vbmc add openshift-master-1 --username admin --password password --port 6302 --address 192.168.102.2 --libvirt-uri qemu+ssh://192.168.254.100/system

[root@kni-bastion ~]# vbmc add openshift-master-2 --username admin --password password --port 6303 --address 192.168.102.2 --libvirt-uri qemu+ssh://192.168.254.100/system

[root@kni-bastion ~]# vbmc add openshift-worker-0 --username admin --password password --port 6304 --address 192.168.102.2 --libvirt-uri qemu+ssh://192.168.254.100/system

[root@kni-bastion ~]# vbmc add openshift-worker-1 --username admin --password password --port 6305 --address 192.168.102.2 --libvirt-uri qemu+ssh://192.168.254.100/system

[root@kni-bastion ~]# vbmc list

+--------------------+---------+---------------+------+

| Domain name | Status | Address | Port |

+--------------------+---------+---------------+------+

| openshift-master-0 | running | 192.168.102.2 | 6301 |

| openshift-master-1 | down | 192.168.102.2 | 6302 |

| openshift-master-2 | down | 192.168.102.2 | 6303 |

| openshift-worker-0 | down | 192.168.102.2 | 6304 |

| openshift-worker-1 | down | 192.168.102.2 | 6305 |

+--------------------+---------+---------------+------+

[root@kni-bastion ~]# vbmc start openshift-master-1 openshift-master-2 openshift-worker-0 openshift-worker-1

[root@kni-bastion ~]# vbmc list

+--------------------+---------+---------------+------+

| Domain name | Status | Address | Port |

+--------------------+---------+---------------+------+

| openshift-master-0 | running | 192.168.102.2 | 6301 |

| openshift-master-1 | running | 192.168.102.2 | 6302 |

| openshift-master-2 | running | 192.168.102.2 | 6303 |

| openshift-worker-0 | running | 192.168.102.2 | 6304 |

| openshift-worker-1 | running | 192.168.102.2 | 6305 |

+--------------------+---------+---------------+------+
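
With all five virtual BMCs running, the IPMI check shown earlier for openshift-master-0 can be repeated for every port. A minimal sketch, assuming the same lab credentials; `DRY_RUN` and `bmc_power_status` are names introduced here for illustration, and by default the commands are only printed:

```shell
#!/usr/bin/env bash
# Query the power state of every virtual BMC. With DRY_RUN=1 (the default
# here) the ipmitool commands are only printed.
DRY_RUN=${DRY_RUN:-1}

bmc_power_status() {
  local host=$1 port=$2
  set -- ipmitool -I lanplus -U admin -P password -H "$host" -p "$port" power status
  if [ "$DRY_RUN" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

for port in 6301 6302 6303 6304 6305; do
  bmc_power_status 192.168.102.2 "$port"
done
```

Running the script with `DRY_RUN=0` executes the queries; each node should report that chassis power is off before the installer takes over.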

7. Allow the VBMC UDP ports through the firewall:

[root@kni-bastion ~]# firewall-cmd --add-port=6301-6305/udp --permanent

[root@kni-bastion ~]# firewall-cmd --reload

Provisioning Node Configuration

Ensure the provisioner node has been registered to RHSM and properly subscribed.

Provisioner Node: Environment Setup

1. Create user ‘kni’ and configure it as passwordless sudoers.

[root@provisioner ~]# useradd kni

[root@provisioner ~]# passwd kni

Changing password for user kni.

New password:

BAD PASSWORD: The password fails the dictionary check - it is based on a dictionary word

Retype new password:

passwd: all authentication tokens updated successfully.

[root@provisioner ~]# echo "kni ALL=(root) NOPASSWD:ALL" | tee -a /etc/sudoers.d/kni

kni ALL=(root) NOPASSWD:ALL

[root@provisioner ~]# chmod 0440 /etc/sudoers.d/kni

[root@provisioner ~]# su - kni -c "ssh-keygen -t rsa -f /home/kni/.ssh/id_rsa -N ''"

2. Install necessary packages:

[root@provisioner ~]# su - kni

[kni@provisioner ~]$ sudo dnf install -y libvirt qemu-kvm mkisofs python3-devel jq ipmitool tar

3. Add the kni user to the libvirt group:

[kni@provisioner ~]$ sudo usermod --append --groups libvirt kni

4. Allow HTTP through firewalld for the RHCOS image cache server:

[kni@provisioner ~]$ sudo firewall-cmd --zone=public --add-service=http --permanent

success

[kni@provisioner ~]$ sudo firewall-cmd --reload

success

5. Start and enable libvirtd (nested) on the provisioner node:

[kni@provisioner ~]$ sudo systemctl enable libvirtd --now

[kni@provisioner ~]$ sudo systemctl is-active libvirtd

active

6. Configure the libvirt storage pool for the bootstrap VM:

[kni@provisioner ~]$ sudo virsh pool-define-as --name default --type dir --target /var/lib/libvirt/images

Pool default defined

[kni@provisioner ~]$ sudo virsh pool-start default

Pool default started

[kni@provisioner ~]$ sudo virsh pool-autostart default

Pool default marked as autostarted

Provisioner Node: Networking Setup

NOTE: Run these commands from a graphical or serial console, because there will be some network interruption during configuration. Alternatively, use a third interface, separate from the “baremetal” and “provisioning” bridge interfaces, to avoid interrupting an SSH session.

1. Configure “baremetal” networking bridge:

[kni@provisioner ~]$ nmcli con down enp2s0

[kni@provisioner ~]$ nmcli con delete enp2s0

[kni@provisioner ~]$ nmcli con add ifname baremetal type bridge con-name baremetal autoconnect yes ipv4.method auto

[kni@provisioner ~]$ nmcli con add type bridge-slave ifname enp2s0 master baremetal

[kni@provisioner ~]$ nmcli con reload

[kni@provisioner ~]$ nmcli con down baremetal

[kni@provisioner ~]$ nmcli con up baremetal

2. Configure the “provisioning” networking bridge. If the baremetal interface is already up at this point, we can continue over an SSH connection.

[root@provisioner ~]# nmcli con delete enp1s0

[root@provisioner ~]# nmcli con add ifname provisioning type bridge con-name provisioning

[root@provisioner ~]# nmcli con add type bridge-slave ifname enp1s0 master provisioning

[root@provisioner ~]# nmcli con modify provisioning ipv4.addresses 172.22.0.1/24 ipv4.method manual

[root@provisioner ~]# nmcli con down provisioning

[root@provisioner ~]# nmcli con up provisioning
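
Because the bridge setup interrupts networking partway through, it can help to run each sequence as a single unit. The following is a condensed sketch of the nmcli steps above, not the exact commands: `run`, `make_bridge`, and `DRY_RUN` are names introduced here, the IPv4 settings are applied with `nmcli con modify` for both bridges, and the connection names are assumed to match the interface names. By default it only prints the commands.

```shell
#!/usr/bin/env bash
# Recreate an interface as a port of a new bridge, following the nmcli steps
# above. With DRY_RUN=1 (the default here) commands are printed only.
DRY_RUN=${DRY_RUN:-1}

run() {
  if [ "$DRY_RUN" = 1 ]; then echo "$*"; else "$@"; fi
}

# Arguments: bridge name, physical interface, then optional ipv4 settings.
make_bridge() {
  local bridge=$1 nic=$2
  shift 2
  run nmcli con delete "$nic"
  run nmcli con add ifname "$bridge" type bridge con-name "$bridge"
  run nmcli con add type bridge-slave ifname "$nic" master "$bridge"
  if [ "$#" -gt 0 ]; then
    run nmcli con modify "$bridge" "$@"
  fi
  run nmcli con up "$bridge"
}

make_bridge baremetal enp2s0 ipv4.method auto
make_bridge provisioning enp1s0 ipv4.addresses 172.22.0.1/24 ipv4.method manual
```

Setting `DRY_RUN=0` and running the script as root from the console applies the changes in one pass.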

Provisioner Node: Prepare RHOCP binaries

1. Ensure pull-secret.txt is available on the provisioner node. Get it from https://cloud.redhat.com.

[kni@provisioner ~]$ cat pull-secret.txt

2. We are going to use version 4.5.13. Other releases can be found at https://openshift-release.apps.ci.l2s4.p1.openshiftapps.com/. Now let's get the RELEASE_IMAGE URL.

[kni@provisioner ~]$ export VERSION="4.5.13"

[kni@provisioner ~]$ export RELEASE_IMAGE=$(curl -s https://mirror.openshift.com/pub/openshift-v4/clients/ocp/$VERSION/release.txt | grep 'Pull From: quay.io' | awk -F ' ' '{print$3}' )

[kni@provisioner ~]$ echo $RELEASE_IMAGE

quay.io/openshift-release-dev/ocp-release@sha256:8d104847fc2371a983f7cb01c7c0a3ab35b7381d6bf7ce355d9b32a08c0031f0
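
The grep and awk stages used above to pull the image reference out of release.txt can be folded into one awk call. A minimal sketch, with `release_image` as a hypothetical function name and a sample input that only mimics the relevant line of release.txt:

```shell
#!/usr/bin/env bash
# Extract the release image pull spec from release.txt content on stdin.
# awk matches the "Pull From:" line and prints its third field, which
# replaces the separate grep and awk stages.
release_image() {
  awk '/Pull From: quay.io/ { print $3; exit }'
}

# Illustrative input; only the "Pull From:" line matters. The digest is
# the one shown in the output above.
release_image <<'EOF'
Name:      4.5.13
Pull From: quay.io/openshift-release-dev/ocp-release@sha256:8d104847fc2371a983f7cb01c7c0a3ab35b7381d6bf7ce355d9b32a08c0031f0
EOF
```

In practice the function would sit at the end of the curl pipeline, for example `curl -s https://mirror.openshift.com/pub/openshift-v4/clients/ocp/$VERSION/release.txt | release_image`.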

3. Extract the baremetal installer:

[kni@provisioner ~]$ export cmd=openshift-baremetal-install

[kni@provisioner ~]$ export pullsecret_file=~/pull-secret.txt

[kni@provisioner ~]$ export extract_dir=$(pwd)

[kni@provisioner ~]$ curl -s https://mirror.openshift.com/pub/openshift-v4/clients/ocp/$VERSION/openshift-client-linux-$VERSION.tar.gz | tar zxvf - oc

[kni@provisioner ~]$ sudo cp oc /usr/local/bin

[kni@provisioner ~]$ oc adm release extract --registry-config "${pullsecret_file}" --command=$cmd --to "${extract_dir}" ${RELEASE_IMAGE}

[kni@provisioner ~]$ ls -rlt

total 439588

-rwxr-xr-x. 1 kni kni 78599240 Sep 16 23:27 oc

-rwxr-xr-x. 1 kni kni 371528960 Sep 18 16:59 openshift-baremetal-install

-rw-rw-r--. 1 kni kni 2739 Oct 14 17:01 pull-secret.txt

[kni@provisioner ~]$

[kni@provisioner ~]$ sudo cp openshift-baremetal-install /usr/local/bin/

Provisioner Node: RHCOS Image Cache

1. This step is optional: we create a local cache of the RHCOS images to save download time.

2. Install necessary packages:

[kni@provisioner ~]$ sudo dnf install -y podman policycoreutils-python-utils

3. Allow firewall port 8080, we are going to host it on port 8080:

[kni@provisioner ~]$ sudo firewall-cmd --add-port=8080/tcp --zone=public --permanent

success

[kni@provisioner ~]$ sudo firewall-cmd --reload

success

4. Configure the web content directory:

[kni@provisioner ~]$ mkdir /home/kni/rhcos_image_cache

[kni@provisioner ~]$ sudo semanage fcontext -a -t httpd_sys_content_t "/home/kni/rhcos_image_cache(/.*)?"

[kni@provisioner ~]$ sudo restorecon -Rv rhcos_image_cache/

Relabeled /home/kni/rhcos_image_cache from unconfined_u:object_r:user_home_t:s0 to unconfined_u:object_r:httpd_sys_content_t:s0

5. Get the RHCOS image details:

[kni@provisioner ~]$ export COMMIT_ID=$(/usr/local/bin/openshift-baremetal-install version | grep '^built from commit' | awk '{print $4}')

[kni@provisioner ~]$ echo $COMMIT_ID

9893a482f310ee72089872f1a4caea3dbec34f28

[kni@provisioner ~]$

[kni@provisioner ~]$ export RHCOS_OPENSTACK_URI=$(curl -s -S https://raw.githubusercontent.com/openshift/installer/$COMMIT_ID/data/data/rhcos.json | jq .images.openstack.path | sed 's/"//g')

[kni@provisioner ~]$ echo $RHCOS_OPENSTACK_URI

rhcos-45.82.202008010929-0-openstack.x86_64.qcow2.gz

[kni@provisioner ~]$

[kni@provisioner ~]$ export RHCOS_QEMU_URI=$(curl -s -S https://raw.githubusercontent.com/openshift/installer/$COMMIT_ID/data/data/rhcos.json | jq .images.qemu.path | sed 's/"//g')

[kni@provisioner ~]$ echo $RHCOS_QEMU_URI

rhcos-45.82.202008010929-0-qemu.x86_64.qcow2.gz

[kni@provisioner ~]$ export RHCOS_PATH=$(curl -s -S https://raw.githubusercontent.com/openshift/installer/$COMMIT_ID/data/data/rhcos.json | jq .baseURI | sed 's/"//g')

[kni@provisioner ~]$ echo $RHCOS_PATH

https://releases-art-rhcos.svc.ci.openshift.org/art/storage/releases/rhcos-4.5/45.82.202008010929-0/x86_64/

[kni@provisioner ~]$ export RHCOS_QEMU_SHA_UNCOMPRESSED=$(curl -s -S https://raw.githubusercontent.com/openshift/installer/$COMMIT_ID/data/data/rhcos.json | jq -r '.images.qemu["uncompressed-sha256"]')

[kni@provisioner ~]$ echo $RHCOS_QEMU_SHA_UNCOMPRESSED

c9e2698d0f3bcc48b7c66d7db901266abf27ebd7474b6719992de2d8db96995a

[kni@provisioner ~]$ export RHCOS_OPENSTACK_SHA_COMPRESSED=$(curl -s -S https://raw.githubusercontent.com/openshift/installer/$COMMIT_ID/data/data/rhcos.json | jq -r '.images.openstack.sha256')

[kni@provisioner ~]$ echo $RHCOS_OPENSTACK_SHA_COMPRESSED

359e7c3560fdd91e64cd0d8df6a172722b10e777aef38673af6246f14838ab1a
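
The two checksums captured above can be used to verify the images once they have been downloaded in the next step. A minimal sketch, assuming `sha256sum` from coreutils is available; `sha256_matches` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Compare the SHA-256 of the data on stdin with an expected hex digest.
sha256_matches() {
  local expected=$1 actual
  actual=$(sha256sum | awk '{ print $1 }')
  [ "$actual" = "$expected" ]
}

# Self-check against the well-known digest of empty input.
if printf '' | sha256_matches e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855; then
  echo "sha256 helper works"
fi
```

The OpenStack image checksum applies to the compressed file, so it would be checked with `sha256_matches "$RHCOS_OPENSTACK_SHA_COMPRESSED" < /home/kni/rhcos_image_cache/$RHCOS_OPENSTACK_URI`. The QEMU checksum is for the uncompressed image, so that file is decompressed first: `gunzip -c /home/kni/rhcos_image_cache/$RHCOS_QEMU_URI | sha256_matches "$RHCOS_QEMU_SHA_UNCOMPRESSED"`.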

5. Now download those images:

[kni@provisioner ~]$ curl -L ${RHCOS_PATH}${RHCOS_QEMU_URI} -o /home/kni/rhcos_image_cache/${RHCOS_QEMU_URI}

[kni@provisioner ~]$ curl -L ${RHCOS_PATH}${RHCOS_OPENSTACK_URI} -o /home/kni/rhcos_image_cache/${RHCOS_OPENSTACK_URI}

6. Run an httpd container that serves the content directory using podman:

[kni@provisioner ~]$ podman run -d --name rhcos_image_cache \

-v /home/kni/rhcos_image_cache:/var/www/html \

-p 8080:8080/tcp \

registry.centos.org/centos/httpd-24-centos7:latest

[kni@provisioner rhcos_image_cache]$ curl -I http://192.168.102.102:8080/rhcos-45.82.202008010929-0-openstack.x86_64.qcow2.gz

HTTP/1.1 200 OK

Date: Wed, 14 Oct 2020 09:43:03 GMT

Server: Apache/2.4.34 (Red Hat) OpenSSL/1.0.2k-fips

Last-Modified: Wed, 14 Oct 2020 09:28:16 GMT

ETag: "357388a6-5b19e26c1c700"

Accept-Ranges: bytes

Content-Length: 896764070

Content-Type: application/x-gzip
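
The cached file names and the checksums gathered earlier are combined into the image URLs that install-config.yaml expects in the next section. A minimal sketch of that composition; `image_url` and `CACHE_URL` are names introduced here for illustration:

```shell
#!/usr/bin/env bash
# Build an image URL of the form used for bootstrapOSImage and
# clusterOSImage: <cache base URL>/<file name>?sha256=<digest>.
image_url() {
  local base=$1 file=$2 sha=$3
  # ${base%/} drops one trailing slash so the URL has no double slash.
  echo "${base%/}/${file}?sha256=${sha}"
}

CACHE_URL="http://192.168.102.102:8080"
image_url "$CACHE_URL" \
  "rhcos-45.82.202008010929-0-qemu.x86_64.qcow2.gz" \
  "c9e2698d0f3bcc48b7c66d7db901266abf27ebd7474b6719992de2d8db96995a"
```

With the variables exported earlier, the two values would be `image_url "$CACHE_URL" "$RHCOS_QEMU_URI" "$RHCOS_QEMU_SHA_UNCOMPRESSED"` and `image_url "$CACHE_URL" "$RHCOS_OPENSTACK_URI" "$RHCOS_OPENSTACK_SHA_COMPRESSED"`.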

Provisioner Node: RHOCP Baremetal IPI Installation

1. Prepare install-config.yaml:

NOTE: Details on the parameters can be found at https://openshift-kni.github.io/baremetal-deploy/4.5/Deployment.html#additional-install-config-parameters_ipi-install-prerequisites.

[kni@provisioner ~]$ cat install-config.yaml

apiVersion: v1
baseDomain: bytewise.my
metadata:
  name: kni
networking:
  machineCIDR: 192.168.102.0/24
compute:
- name: worker
  replicas: 2
controlPlane:
  name: master
  replicas: 3
  platform:
    baremetal: {}
platform:
  baremetal:
    provisioningNetworkInterface: enp1s0
    provisioningDHCPRange: 172.22.0.20,172.22.0.80
    provisioningNetworkCIDR: 172.22.0.0/24
    bootstrapOSImage: http://192.168.102.102:8080/rhcos-45.82.202008010929-0-qemu.x86_64.qcow2.gz?sha256=c9e2698d0f3bcc48b7c66d7db901266abf27ebd7474b6719992de2d8db96995a
    clusterOSImage: http://192.168.102.102:8080/rhcos-45.82.202008010929-0-openstack.x86_64.qcow2.gz?sha256=359e7c3560fdd91e64cd0d8df6a172722b10e777aef38673af6246f14838ab1a
    apiVIP: 192.168.102.108
    ingressVIP: 192.168.102.109
    dnsVIP: 192.168.102.110
    hosts:
      - name: openshift-master-0
        role: master
        bmc:
          address: ipmi://192.168.102.2:6301
          username: admin
          password: password
        bootMACAddress: 52:54:00:d4:d4:37
        hardwareProfile: libvirt
      - name: openshift-master-1
        role: master
        bmc:
          address: ipmi://192.168.102.2:6302
          username: admin
          password: password
        bootMACAddress: 52:54:00:cb:88:27
        hardwareProfile: libvirt
      - name: openshift-master-2
        role: master
        bmc:
          address: ipmi://192.168.102.2:6303
          username: admin
          password: password
        bootMACAddress: 52:54:00:3f:fb:1c
        hardwareProfile: libvirt
      - name: openshift-worker-0
        role: worker
        bmc:
          address: ipmi://192.168.102.2:6304
          username: admin
          password: password
        bootMACAddress: 52:54:00:9a:45:68
        hardwareProfile: libvirt
      - name: openshift-worker-1
        role: worker
        bmc:
          address: ipmi://192.168.102.2:6305
          username: admin
          password: password
        bootMACAddress: 52:54:00:99:fc:52
        hardwareProfile: libvirt
pullSecret: '{...}'
sshKey: '...'

2. Create the installation directory and copy install-config.yaml into it:

[kni@provisioner ~]$ mkdir clusterconfigs

[kni@provisioner ~]$ cp install-config.yaml clusterconfigs/
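
Before running the installer, it can be worth checking that the provisioningDHCPRange addresses fall inside provisioningNetworkCIDR. A minimal sketch using shell arithmetic; the function names `ip_to_int` and `in_cidr` are assumptions, and the values are the ones from install-config.yaml above:

```shell
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() (
  # The function body runs in a subshell so the IFS change does not leak.
  IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Succeed when the address lies inside the CIDR block (for example
# 172.22.0.20 in 172.22.0.0/24).
in_cidr() {
  local ip=$1 net=${2%/*} bits=${2#*/} mask
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

for ip in 172.22.0.20 172.22.0.80; do
  if in_cidr "$ip" 172.22.0.0/24; then
    echo "$ip is inside 172.22.0.0/24"
  else
    echo "$ip is OUTSIDE 172.22.0.0/24"
  fi
done
```

The same helper could be pointed at the API, ingress, and DNS VIPs against machineCIDR.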

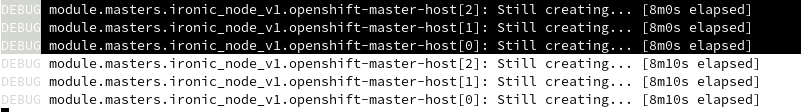

3. Execute the installer to create the cluster:

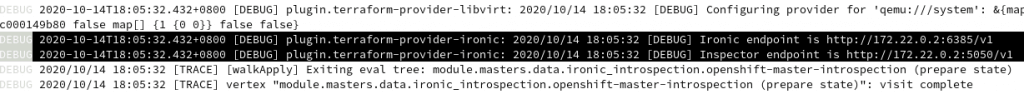

[kni@provisioner ~]$ export TF_LOG=TRACE

[kni@provisioner ~]$ openshift-baremetal-install --dir ~/clusterconfigs --log-level debug create cluster

High Level Bootstrapping Process

1. The installer creates a bootstrap VM on the provisioner node. (It takes some time for this VM to boot.)

2. The bootstrap VM starts the containers below to help with the bootstrapping:

- initial etcd

- dnsmasq

- ironic-api

- ironic-inspector

- ironic-conductor

- httpd

- mariadb

3. At the same time, the installer keeps trying to connect to the Ironic API endpoint on the bootstrap VM. The connection succeeds once the Ironic API is ready; the RHOCP nodes are then powered on and the provisioning process continues.

4. Next, the installer continues to provision the cluster in the same way as the other IPI methods.

5. On the provisioner node, we can now use the ‘oc’ client to watch the cluster installation progress:

[kni@provisioner clusterconfigs]$ export KUBECONFIG=/home/kni/clusterconfigs/auth/kubeconfig

[kni@provisioner clusterconfigs]$ oc get co

NAME VERSION AVAILABLE PROGRESSING DEGRADED SINCE

authentication Unknown Unknown False 36s

cloud-credential True False False 15m

cluster-autoscaler

config-operator

console

csi-snapshot-controller

dns

etcd 4.5.13 False True False 29s

image-registry

ingress

insights

kube-apiserver False False False 34s

kube-controller-manager False True False 36s

kube-scheduler False True False 36s

kube-storage-version-migrator 4.5.13 False False False 37s

machine-api

machine-approver

machine-config True

marketplace

monitoring

network 4.5.13 True True False 36s

node-tuning 4.5.13 True False False 32s

openshift-apiserver 4.5.13 False False False 34s

openshift-controller-manager False True False 31s

openshift-samples

operator-lifecycle-manager

operator-lifecycle-manager-catalog

operator-lifecycle-manager-packageserver

service-ca 4.5.13 True False False 30s

storage

[kni@provisioner clusterconfigs]$ oc get nodes

NAME STATUS ROLES AGE VERSION

openshift-master-0.kni.bytewise.my NotReady master 46s v1.18.3+47c0e71

openshift-master-1.kni.bytewise.my NotReady master 51s v1.18.3+47c0e71

openshift-master-2.kni.bytewise.my NotReady master 76s v1.18.3+47c0e71

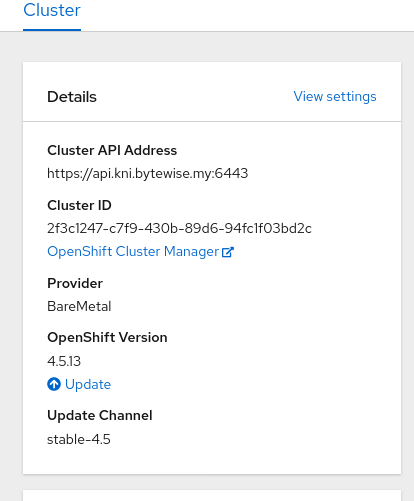

6. Finally, verify that the cluster is properly bootstrapped. The bootstrap VM will be deleted from the provisioner node:

[kni@provisioner ~]$ oc get co

NAME VERSION AVAILABLE PROGRESSING DEGRADED SINCE

authentication 4.5.13 True False False 17m

cloud-credential 4.5.13 True False False 3h1m

cluster-autoscaler 4.5.13 True False False 145m

config-operator 4.5.13 True False False 146m

console 4.5.13 True False False 135m

csi-snapshot-controller 4.5.13 True False False 9m48s

dns 4.5.13 True False False 165m

etcd 4.5.13 True False False 165m

image-registry 4.5.13 True False False 146m

ingress 4.5.13 True False False 17m

insights 4.5.13 True False False 161m

kube-apiserver 4.5.13 True False False 164m

kube-controller-manager 4.5.13 True False False 164m

kube-scheduler 4.5.13 True False False 164m

kube-storage-version-migrator 4.5.13 True False False 14m

machine-api 4.5.13 True False False 152m

machine-approver 4.5.13 True False False 163m

machine-config 4.5.13 True False False 13m

marketplace 4.5.13 True False False 8m15s

monitoring 4.5.13 True False False 8m15s

network 4.5.13 True False False 166m

node-tuning 4.5.13 True False False 166m

openshift-apiserver 4.5.13 True False False 10m

openshift-controller-manager 4.5.13 True False False 160m

openshift-samples 4.5.13 True False False 145m

operator-lifecycle-manager 4.5.13 True False False 165m

operator-lifecycle-manager-catalog 4.5.13 True False False 165m

operator-lifecycle-manager-packageserver 4.5.13 True False False 161m

service-ca 4.5.13 True False False 166m

storage 4.5.13 True False False 159m

[kni@provisioner ~]$ oc get nodes

NAME STATUS ROLES AGE VERSION

openshift-master-0.kni.bytewise.my Ready master 168m v1.18.3+47c0e71

openshift-master-1.kni.bytewise.my Ready master 168m v1.18.3+47c0e71

openshift-master-2.kni.bytewise.my Ready master 168m v1.18.3+47c0e71

openshift-worker-0.kni.bytewise.my Ready worker 19m v1.18.3+47c0e71

openshift-worker-1.kni.bytewise.my Ready worker 19m v1.18.3+47c0e71

[kni@provisioner ~]$

[kni@provisioner ~]$ oc get baremetalhosts.metal3.io -n openshift-machine-api

NAME STATUS PROVISIONING STATUS CONSUMER BMC HARDWARE PROFILE ONLINE ERROR

openshift-master-0 OK externally provisioned kni-master-0 ipmi://192.168.102.2:6301 true

openshift-master-1 OK externally provisioned kni-master-1 ipmi://192.168.102.2:6302 true

openshift-master-2 OK externally provisioned kni-master-2 ipmi://192.168.102.2:6303 true

openshift-worker-0 OK provisioned kni-worker-0-v8lfw ipmi://192.168.102.2:6304 libvirt true

openshift-worker-1 OK provisioned kni-worker-0-k6k2s ipmi://192.168.102.2:6305 libvirt true

[kni@provisioner ~]$
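
The difference between the in-progress and final `oc get co` listings above comes down to whether every operator reports AVAILABLE as True. A rough sketch that counts the operators that are not there yet by parsing the text output; `co_not_available` is a hypothetical helper, and the column handling is a heuristic for rows with an empty VERSION column rather than a robust parser:

```shell
#!/usr/bin/env bash
# Count cluster operators that are not yet Available in "oc get co" text
# output read from stdin. Rows with all six columns carry AVAILABLE in
# field 3; rows missing VERSION carry it in field 2; shorter rows are
# treated as not ready.
co_not_available() {
  awk 'NF == 0 || $1 == "NAME" { next }
       {
         avail = (NF >= 6) ? $3 : ((NF == 5) ? $2 : "")
         if (avail != "True") n++
       }
       END { print n + 0 }'
}

# Sample rows taken from the in-progress listing shown earlier.
co_not_available <<'EOF'
NAME             VERSION   AVAILABLE   PROGRESSING   DEGRADED   SINCE
authentication             Unknown     Unknown       False      36s
cloud-credential           True        False         False      15m
etcd             4.5.13    False       True          False      29s
network          4.5.13    True        True          False      36s
EOF
```

On the provisioner node the function would be fed from `oc get co | co_not_available`; a result of 0 matches the fully bootstrapped state shown in the final listing.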

References

- https://openshift-kni.github.io/baremetal-deploy/4.5/Deployment.html

- https://github.com/williamcaban/ocp4-bm-ipi
